A risk-based testing approach for business intelligence reporting solutions

by Dilip Cheerala on 27 March 2013

Business Intelligence reporting solutions are built to report on and analyse data from data warehouses. They vary in size and complexity depending on the needs of the business, the underlying data stores, and the number of reports and users.

Building such a reporting solution isn’t trivial, and neither is testing it for release sign-off. One of the challenges a test manager faces is deciding what to test or, more precisely, what not to test. While every report could in principle be tested, doing so increases the project budget and extends the timeframe.

It is a common pattern in software development projects that the time allocated to test the solution adequately is rarely sufficient: testing is usually the last phase of the project, and the target release dates won’t move to accommodate comprehensive testing. So how can the test manager test the reporting solution with reasonable confidence and within the given timeframe?

One answer to this problem is to use the “Risk Based Testing” technique to assess and prioritise the test scope based on the risk from system, reporting and business complexities. This allows the test manager to allocate appropriate testing activities to mitigate the identified risks.

This technique has often been applied to software testing and other disciplines. Its primary purpose is to make testing effective within the limited time and resources available, by working through the prioritised scope items in order from highest to lowest risk.
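To make "working through the prioritised list" concrete, here is a minimal Python sketch. It assumes each scope item already has a numeric risk exposure score and a testing effort estimate in hours; the `PrioritisedItem` type, `plan_testing` function and the example items are hypothetical, not part of any tool used on the project.

```python
from typing import NamedTuple


class PrioritisedItem(NamedTuple):
    """A test scope item (hypothetical structure for illustration)."""
    name: str
    exposure: int        # risk exposure score; higher means riskier
    effort_hours: float  # assumed estimate of the testing effort


def plan_testing(items: list[PrioritisedItem], budget_hours: float) -> list[str]:
    """Take items from highest to lowest risk until the time budget runs out.

    The loop stops at the first item that no longer fits, so the plan never
    covers a lower-risk item while skipping a higher-risk one.
    """
    planned: list[str] = []
    remaining = budget_hours
    # Highest exposure first; ties are broken by smaller effort.
    for item in sorted(items, key=lambda i: (-i.exposure, i.effort_hours)):
        if item.effort_hours > remaining:
            break
        planned.append(item.name)
        remaining -= item.effort_hours
    return planned


# Hypothetical scope items and budget.
items = [
    PrioritisedItem("Staff directory report", exposure=2, effort_hours=4),
    PrioritisedItem("Finance summary report", exposure=20, effort_hours=16),
    PrioritisedItem("Sales dashboard", exposure=12, effort_hours=8),
]
print(plan_testing(items, budget_hours=26))
```

Stopping at the first item that does not fit is a deliberate choice that keeps strict risk order; a team could instead skip that item and continue, trading strict ordering for fuller use of the budget.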

Identifying the test scope and carrying out the risk assessment are two significant activities that should collectively be performed by the project team members. It is important to include a “cross-section” of the project team to get a good balance of experience and knowledge of the systems and business.

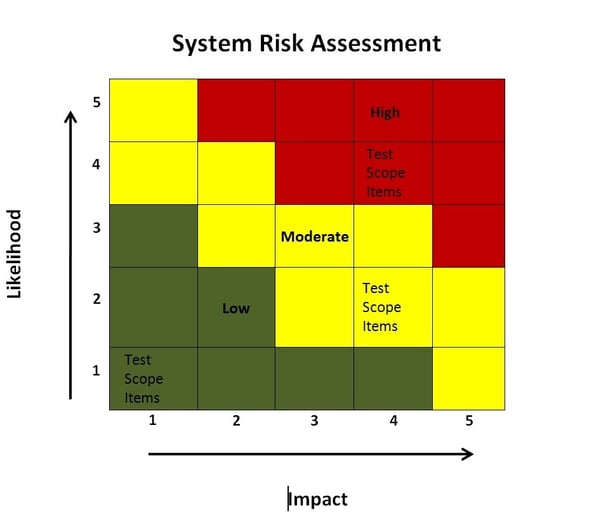

Each test scope item is assessed from a system risk point of view, considering the likelihood of the risk occurring and its impact in terms of cost, time and quality, and both are rated on a scale of 1 to 5. For likelihood, 1 means least likely and 5 means most likely; for impact, 1 means least significant and 5 means most significant. Risk exposure is calculated by multiplying the likelihood by the impact, giving a score between 1 and 25.
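The calculation itself is simple; the minimal Python sketch below shows it with validation of the 1 to 5 scale. The `ScopeItem` class and the example report names are hypothetical.

```python
from dataclasses import dataclass

RATING_MIN, RATING_MAX = 1, 5


@dataclass(frozen=True)
class ScopeItem:
    """A test scope item with system risk ratings (1 = lowest, 5 = highest)."""
    name: str
    likelihood: int
    impact: int

    def __post_init__(self) -> None:
        # Reject ratings outside the agreed 1-5 scale.
        for field_name in ("likelihood", "impact"):
            value = getattr(self, field_name)
            if not RATING_MIN <= value <= RATING_MAX:
                raise ValueError(f"{field_name} must be between 1 and 5, got {value}")

    @property
    def exposure(self) -> int:
        # Risk exposure = likelihood x impact, so it ranges from 1 to 25.
        return self.likelihood * self.impact


# Hypothetical scope items.
for item in (
    ScopeItem("Sales dashboard", likelihood=4, impact=5),
    ScopeItem("Staff directory report", likelihood=2, impact=1),
):
    print(item.name, item.exposure)
```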

Each report should also be assessed for reporting complexity and for business risks such as reputational impact and data sensitivity. This information is used when estimating the required testing effort.

Once the risk exposure assessment is completed, the team categorise the test scope items into high, moderate or low risk groups, and allocate a combination of required testing techniques such as basic unit testing, partial system testing or full user acceptance testing. Each testing type (i.e. unit testing, system testing and user acceptance testing) can be broken down into basic, partial and full testing. This breakdown helps in the estimation of the testing effort.
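One way to record the categorisation and the resulting allocation is sketched below in Python. The exposure thresholds (15 and 8) and the depth assigned to each testing type are hypothetical placeholders; each project team would agree its own values during the assessment.

```python
from enum import Enum


class RiskGroup(Enum):
    HIGH = "high"
    MODERATE = "moderate"
    LOW = "low"


# Hypothetical thresholds on risk exposure (1-25).
HIGH_THRESHOLD = 15
MODERATE_THRESHOLD = 8

# Hypothetical depth (basic/partial/full) for each testing type per risk group.
TEST_ALLOCATION = {
    RiskGroup.HIGH: {"unit": "full", "system": "full", "uat": "full"},
    RiskGroup.MODERATE: {"unit": "full", "system": "partial", "uat": "partial"},
    RiskGroup.LOW: {"unit": "basic", "system": "basic", "uat": "basic"},
}


def categorise(exposure: int) -> RiskGroup:
    """Map a risk exposure score (likelihood x impact) to a risk group."""
    if not 1 <= exposure <= 25:
        raise ValueError(f"exposure must be between 1 and 25, got {exposure}")
    if exposure >= HIGH_THRESHOLD:
        return RiskGroup.HIGH
    if exposure >= MODERATE_THRESHOLD:
        return RiskGroup.MODERATE
    return RiskGroup.LOW


def allocate_testing(exposure: int) -> dict[str, str]:
    """Return a copy of the testing depth for each testing type."""
    return dict(TEST_ALLOCATION[categorise(exposure)])


print(categorise(20), allocate_testing(20))
print(categorise(2), allocate_testing(2))
```

Every risk group still receives unit, system and user acceptance testing; only the depth changes, which is what makes the allocation usable for effort estimation.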

It is important to perform all the relevant testing (e.g. unit testing, system testing and user acceptance testing), and equally important to allocate an appropriate level of depth to each (e.g. basic, partial or full). A broad definition of system testing is used here: it covers functional, integration, performance and load testing.

An example of the system risk assessment is shown below.

I have recently used this technique successfully to develop a test strategy for a complex reporting solution for a client. The strategy recommended appropriate allocation of the testing techniques and effort to mitigate the identified risks to achieve a pragmatic outcome for the project.
